Using GenAI / LLM tools is becoming the norm for all aspects of software development, including coding, testing, and platform engineering. The team at DORA used the outcomes of their 2025 research on AI-assisted software development to create an AI Capabilities Model. We've been asking ourselves this question: How can we, as quality and testing leaders, help our teams apply these capabilities to continually improve process and product quality?

AI is an amplifier

The overarching conclusion of DORA's AI research is one to keep in your mind at all times: "AI's primary role in software development is that of an amplifier". Research by others points to the same conclusion. Organizations that proactively build in quality, nurture a healthy, psychologically safe culture, and let people do their best work experience big benefits when they use AI tech wisely.

Organizations that try to deliver huge batches of new code at once create even bigger testing bottlenecks with AI. Their AI agents generate volumes of code that no human testers can review effectively. When team members are afraid to experiment, fail, and make problems visible, they experience even poorer performance when trying to adopt AI. AI amplifies dysfunction.

As the capabilities model report says, "The greatest returns come not from the tools themselves, but from investing in the foundational systems that enable success." We see this message of amplification and augmentation consistently in research by various development and testing organizations, such as Beyond Quality and DX.

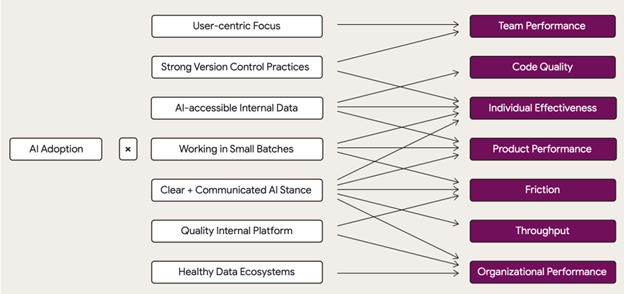

The DORA researchers identified seven foundational capabilities that let teams improve by using AI assistance (see the model illustration below). Take "working in small batches" as an example. Teams working in a waterfall mode feed AI agents large amounts of specifications at once. They can't keep up with reviewing the resulting code. They lose confidence, their product becomes even less reliable, and their performance gets even worse. When teams that already practice working on one small batch of changes at a time adopt AI, they can feel confident about the small amounts of generated production code. The AI tools help them go faster.

How quality and testing professionals can contribute

The capabilities listed in the model (see below) are familiar to testing and quality practitioners. Quality engineers use visual frameworks and real-time collaboration to lead effective conversations as they plan each new feature. This lets the team identify risks and prioritize what is most valuable to customers. Teams can then slice the feature into small, testable stories, which helps them work on one small batch at a time. Building shared understanding of customer needs for each new change enables the humans to provide the correct expected behavior to the AI agents that build the code. Feedback cycles speed up and people can focus on the knowledge work.

Communication is a core practice for building in quality. If their organization doesn't have clear policies for how to use AI, teams get into trouble. Some testers and developers may believe they shouldn't use AI tools at all, missing out on the potential benefits. Others may use AI technology without knowing the potential dangers, such as releasing private data to the cloud.

Testing professionals can help lead the effort to build and communicate that clear organization-wide stance on how to approach AI-assisted development. They can make sure those guidelines cover testing activities as well. Test the AI policies: are they clearly documented? Can everyone access them easily? High-quality internal documentation also applies to data, and that data now needs to be accessible to the AI tools your teams agree to use.

We (Janet and Lisa) are always harping on the need to build relationships, both within and outside of your teams. Those relationships enable you to collaborate with platform engineers and other team members to build reliable delivery workflows. Together, the team can agree on safe release strategies that allow quick rollback of problematic changes.

A holistic approach to using AI assistance

Holistic testing is all about the whole team owning quality and collaborating in testing activities all the way around the infinite loop of software development. You may feel overwhelmed by all the AI hype. Yet, the same principles apply.

Keep starting those good conversations, using visual models and real-time collaboration tools, and include everyone involved in creating and delivering your product. Apply the holistic testing model to plan a testing strategy that takes advantage of AI tools and avoids the many pitfalls of using them. Remember that these tools will amplify both the good and the bad. Use frequent retrospectives to keep a close eye on what AI assistance